This blog post will go over the process behind building the COVID exposure site map for the Australian state of Victoria. The source code is located at benkaiser/covid-vic-exposure-map. This tool was built back in May and is still serving hundreds of viewers every day.

Why Build a Map?

The short answer: because the Victorian government didn't. They expected citizens to scroll through a list of hundreds of exposure sites all across the state to work out whether they had potentially been exposed to COVID. Since I created the tool, Vic Gov DHHS has published its own map, which is available on the official exposure site page.

Building Process

Grabbing the Data

On the official exposure site page, opening the network tab of the developer tools shows that it makes a request to https://www.coronavirus.vic.gov.au/sdp-ckan?resource_id=afb52611-6061-4a2b-9110-74c920bede77&limit=10000 . Inspecting the response shows that it returns the exposure sites, and with limit=10000 we can assume it returns all of them (praying that there are never more than 10k sites, that is...).
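As a rough sketch of that request in Python: the URL and resource ID are the ones from the network tab, but the response shape (`result.records` and `result.total`) is an assumption based on the usual CKAN datastore convention, and the function names are made up for illustration. Comparing the reported total against the number of records received is one way to notice if the 10k limit is ever exceeded.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

BASE_URL = "https://www.coronavirus.vic.gov.au/sdp-ckan"
RESOURCE_ID = "afb52611-6061-4a2b-9110-74c920bede77"


def build_url(limit=10000):
    """Rebuild the request URL seen in the network tab."""
    query = urlencode({"resource_id": RESOURCE_ID, "limit": limit})
    return f"{BASE_URL}?{query}"


def extract_sites(payload):
    """Pull the list of sites out of a decoded JSON response.

    Assumption: the payload follows the CKAN datastore layout, with the
    rows under result.records and the overall row count under result.total.
    """
    result = payload.get("result", {})
    records = result.get("records", [])
    total = result.get("total", len(records))
    if total > len(records):
        # The limit was too small to return every site.
        raise RuntimeError(f"got {len(records)} of {total} sites; raise the limit")
    return records


def fetch_sites(limit=10000):
    """Download and decode the current list of exposure sites."""
    with urlopen(build_url(limit), timeout=30) as response:
        return extract_sites(json.load(response))
```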

Geocoding The Data

Once we have the list of sites, the next step is to convert each street address/suburb into a latitude and longitude for placement on a map. For this, I ended up using the Azure Maps Service, since Microsoft provides its employees with some free Azure credits each month. The geocoding isn't always accurate, either because Azure Maps picks the wrong spot or because the input data is bad. For those cases, I maintain a manual overides.json file that fixes the few inaccurate locations.
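A minimal sketch of that lookup, assuming the Azure Maps "Search Address" REST endpoint and its `results[0].position` response field. The shape of the overrides mapping (address to `lat`/`lon`) is hypothetical, as the post doesn't describe the file's layout, and the function names are illustrative only.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

AZURE_SEARCH_URL = "https://atlas.microsoft.com/search/address/json"


def geocode_url(address, subscription_key):
    """Build an Azure Maps address search URL limited to Australia."""
    params = {
        "api-version": "1.0",
        "subscription-key": subscription_key,
        "query": address,
        "countrySet": "AU",
        "limit": 1,
    }
    return f"{AZURE_SEARCH_URL}?{urlencode(params)}"


def first_position(payload):
    """Return (lat, lon) for the top result, or None if nothing matched."""
    results = payload.get("results") or []
    if not results:
        return None
    position = results[0]["position"]
    return position["lat"], position["lon"]


def geocode(address, subscription_key, overrides):
    """Prefer a manual override; otherwise ask Azure Maps.

    `overrides` is a hypothetical mapping: {address: {"lat": ..., "lon": ...}}.
    """
    if address in overrides:
        fixed = overrides[address]
        return fixed["lat"], fixed["lon"]
    with urlopen(geocode_url(address, subscription_key), timeout=30) as response:
        return first_position(json.load(response))
```

Checking the overrides first means a corrected location never costs a request and can't be clobbered by a later lookup.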

Caching the Geocoded Data

To ensure I don't rack up a huge Azure bill, each refresh first overlays the new data with all the previously fetched locations. This way we only fetch fresh latitudes/longitudes for locations that are new or have changed. One potential improvement on top of this approach would be to store all past geocoding requests and their lat/lon in an append-only cache file. That way, if DHHS removes an exposure site and later re-adds it, the scraper won't fetch those coordinates again.
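The append-only cache idea could look something like the sketch below. This is not what the scraper currently does; the one-JSON-object-per-line file format, the key normalisation, and the function names are all assumptions for illustration. The `geocode` argument is any callable that takes an address and returns `(lat, lon)` or `None`.

```python
import json
from pathlib import Path


def normalise(address):
    """Collapse case and whitespace so trivial edits still hit the cache."""
    return " ".join(address.lower().split())


def load_cache(path):
    """Read every cached lookup from a JSON-lines file into a dict."""
    cache = {}
    cache_file = Path(path)
    if cache_file.exists():
        for line in cache_file.read_text(encoding="utf-8").splitlines():
            if line.strip():
                entry = json.loads(line)
                cache[entry["key"]] = (entry["lat"], entry["lon"])
    return cache


def locate(address, cache, path, geocode):
    """Return a cached position, or geocode once and append it to the file."""
    key = normalise(address)
    if key in cache:
        return cache[key]
    position = geocode(address)
    if position is not None:
        cache[key] = position
        # Append only: earlier entries are never rewritten or removed.
        with open(path, "a", encoding="utf-8") as handle:
            entry = {"key": key, "lat": position[0], "lon": position[1]}
            handle.write(json.dumps(entry) + "\n")
    return position
```

Because entries are never deleted, a site that disappears from the feed and comes back later is served from the file rather than from a paid request.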

Automatically Pulling New Exposure Sites

Since the code is hosted as a static site on GitHub Pages (for free!), the website can be updated by updating the repository itself. The easiest way to automate this is to create a GitHub Action (also free!) that runs a script in the repo at a regular interval. The configuration for this action is in scraper.yml, and it is set to run every hour, which GitHub is quite consistent at delivering.
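For readers who haven't set one up before, an hourly workflow might look roughly like this. It is a sketch rather than a copy of the real scraper.yml: the job name, the `python scraper.py` command, and the commit message are placeholders, and the actual repository may run a different script and language.

```yaml
# Hypothetical sketch of an hourly scrape-and-commit workflow.
name: scraper
on:
  schedule:
    - cron: "0 * * * *"   # at minute 0 of every hour (UTC)
  workflow_dispatch:        # also allow manual runs from the Actions tab
jobs:
  scrape:
    runs-on: ubuntu-latest
    permissions:
      contents: write       # needed so the job can push the refreshed data
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.x"
      - name: Run the scraper (placeholder command)
        run: python scraper.py
      - name: Commit any changed data
        run: |
          git config user.name "github-actions"
          git config user.email "github-actions@users.noreply.github.com"
          git add -A
          git diff --cached --quiet || git commit -m "Update exposure sites"
          git push
```

The `git diff --cached --quiet ||` guard skips the commit when nothing changed, so quiet hours don't produce empty commits.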

Mapping the Exposure Sites

Once we have all the sites geocoded, the last step is to build an index.html page for the site that combines it all. This page:

- Pulls in the data generated by the scraper

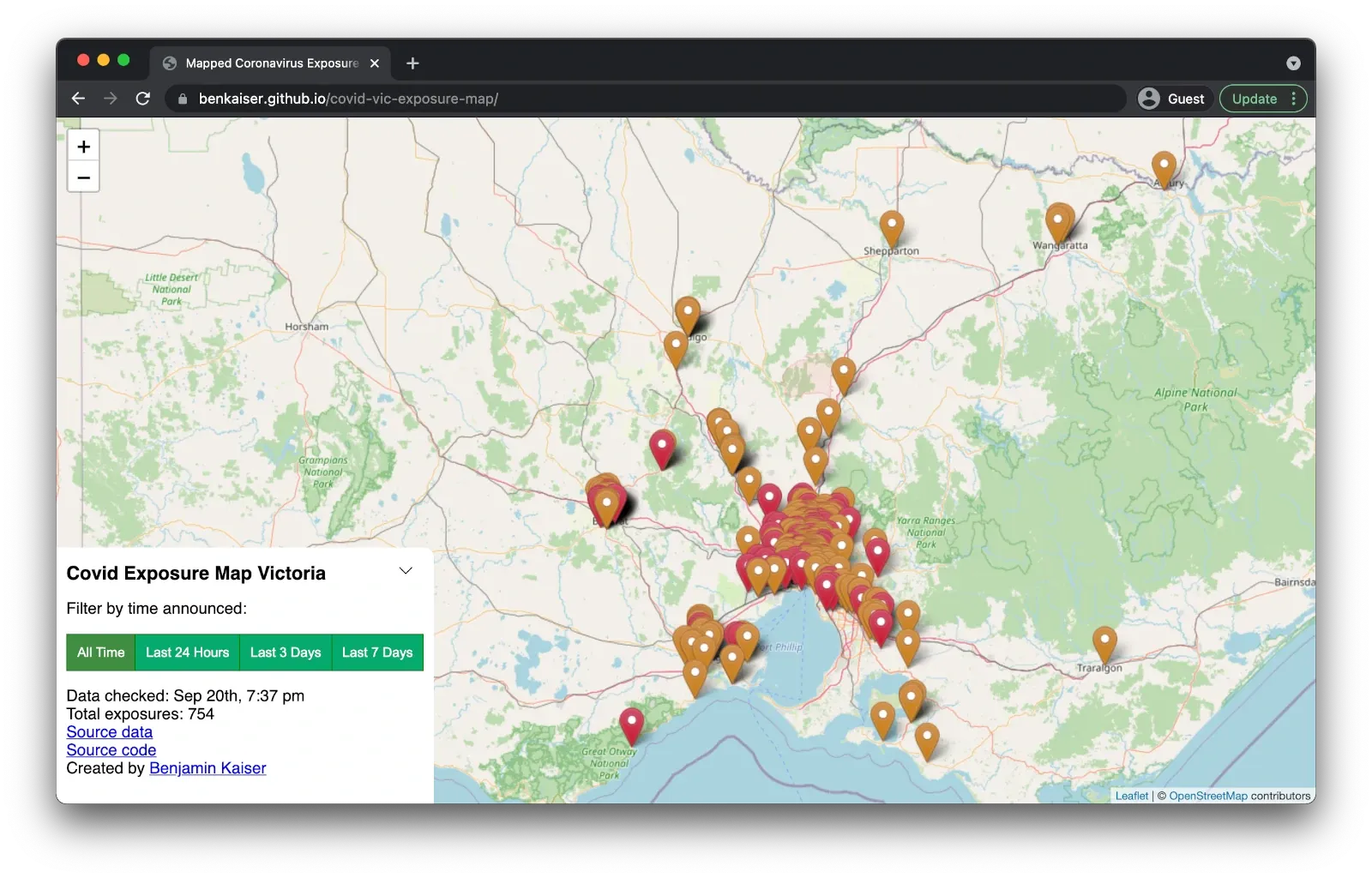

- Maps the exposure sites using Leaflet.js / OpenStreetMap

- Provides basic overview information such as last scrape time and how many exposure sites there are in total
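The post doesn't say which format the scraper writes for the page to consume. One common option for point data is GeoJSON, which Leaflet can draw with `L.geoJSON`. The sketch below assumes each geocoded site is a dict with `lat` and `lon` keys; the `last_scraped` and `site_count` members are hypothetical extras that would carry the overview information.

```python
from datetime import datetime, timezone


def to_geojson(sites, scraped_at=None):
    """Convert a list of geocoded sites into a GeoJSON FeatureCollection.

    Assumption: each site is a dict with "lat" and "lon" plus any other
    fields, which are passed through as feature properties.
    """
    scraped_at = scraped_at or datetime.now(timezone.utc)
    features = []
    for site in sites:
        if site.get("lat") is None or site.get("lon") is None:
            continue  # sites that failed geocoding can't be placed on the map
        properties = {k: v for k, v in site.items() if k not in ("lat", "lon")}
        features.append({
            "type": "Feature",
            # GeoJSON orders coordinates as [longitude, latitude].
            "geometry": {"type": "Point", "coordinates": [site["lon"], site["lat"]]},
            "properties": properties,
        })
    return {
        "type": "FeatureCollection",
        "last_scraped": scraped_at.isoformat(),
        "site_count": len(sites),  # all sites, including any left off the map
        "features": features,
    }
```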

After the initial release, and after seeing a lot of feedback from the community, I decided to add some buttons that let users quickly filter the map to exposure sites reported in the last 1, 3, or 7 days. This is useful for people who use the tool daily and just want to see what has changed recently.
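The filter itself is simple date arithmetic. The real page does this in the browser; the sketch below expresses the same logic in Python, assuming each site carries an ISO-formatted date under a hypothetical `added` key.

```python
from datetime import datetime, timedelta


def filter_recent(sites, days, now=None, date_key="added"):
    """Keep only the sites whose reported date falls within the last `days` days.

    Assumption: site[date_key] is an ISO 8601 string such as "2021-07-20".
    """
    now = now or datetime.now()
    cutoff = now - timedelta(days=days)
    return [site for site in sites if datetime.fromisoformat(site[date_key]) >= cutoff]
```

Each button would then just call the filter with 1, 3, or 7 and redraw the markers from the result.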

End Result

This is what the map looks like today, with several hundred exposure sites across Victoria all mapped out.

If you have any questions about the building process, drop a comment below!